Looking back at gaming in its earliest days, you would be surprised at how strange some practices were. One of these practices, common throughout the gaming world, concerned the end-game credits.

There are many reasons developers were not shown by name at the end of their games. Game development was a scarce skill, and companies did not want to lose their developers. Some companies felt it more natural to let their developers use a screen handle rather than their actual name.

Some developers also kept using their handles even when they were allowed to use their actual names in the credits. Many companies have left this practice behind in today’s gaming industry, but according to some sources, Japan has stuck to the old ways.

Developer Credits

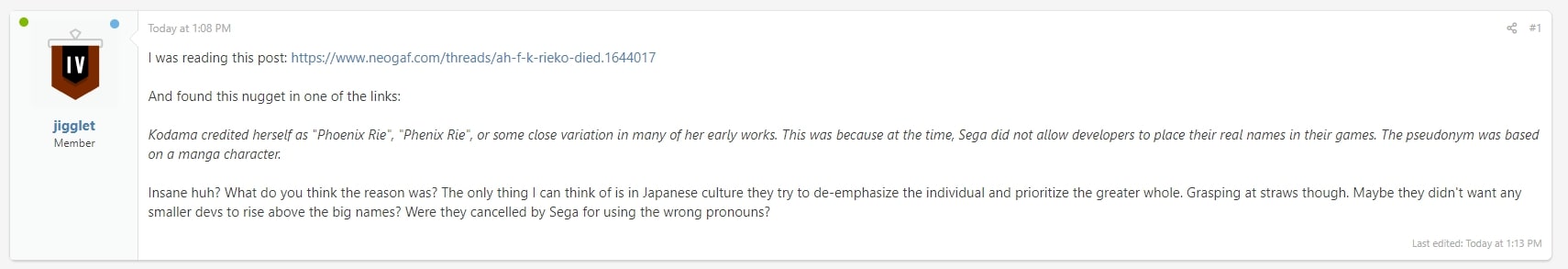

We at TopTierList found a post by the user jigglet, who spotted this fun little fact about companies. In their post, they list some theories as to why some Japanese companies stuck to these practices.

Of course, some of the theories are listed as jokes, but the post did raise a good question in the community. Why did some Japanese companies restrict their developers from crediting themselves properly?

Other users listed more theories in the same thread. We will discuss the ones that make the most sense. However, before we dive into these theories, there is one fact that we must first cover.

Some developers liked to use their screen handles instead of their names, even when they were allowed to use their names. This could have been a trend that was discussed only among the developers, not among players.

The Gist of it All

- Most gaming companies did not let developers credit themselves by name for their games.

- Some developers preferred to use their screen handles to credit themselves.

- The most popular theory regarding this is that it was because of poaching.

Poaching

According to many gamers and developers from the 80s and 90s, companies resorted to this tactic to eliminate any chance of poaching. Poaching is simply one company hiring talented developers away from another. Companies mostly did this to destroy the other company or to use that talent in their own games.

Hiding names is a pretty bad practice, but it is understandable, considering how scarce game development talent was back then. In addition, most Japanese gaming companies don’t hire devs for one or two projects. Their hiring contracts are mostly for life.

Poaching is not the only contributing factor to this discrediting theme. However, it is the most likely one among the theories. It also worked both ways: companies that did poach developers did not want others to know that they had, so hiding names protected both the company and the developer.

This countermeasure against poaching indirectly started a pretty cool game development trend where some developers started to use their gamer tag more often than not. Gamer handles were extremely important in that era, mainly because all of a gamer’s worth was under their gamer tag.

Other Factors

Another reason this was such a huge problem is that game development was a poorly compensated field. Tech was just starting out, and games were nowhere near as mainstream as they are today, apart from the arcade machines.

Poaching became a huge problem because poor compensation forced developers to work on other projects as well. Aside from poor compensation, talent drain was also a massive problem.

Resources were limited, and so were the developers, which ultimately led to this whole fiasco of Japanese companies not letting developers credit themselves.

Ending Note

Ultimately, the era of microcomputers came about, and game developers started to be treated like rock stars. Japan stayed behind that curve and held on to its tradition. But, if we look at some of the earlier developers now, they are doing just fine.

Finally, the time is here to conclude this article, so let me know what you think. Additionally, why do you think some companies did not allow game devs to credit themselves properly in their games? Take care, gamers and game developers.